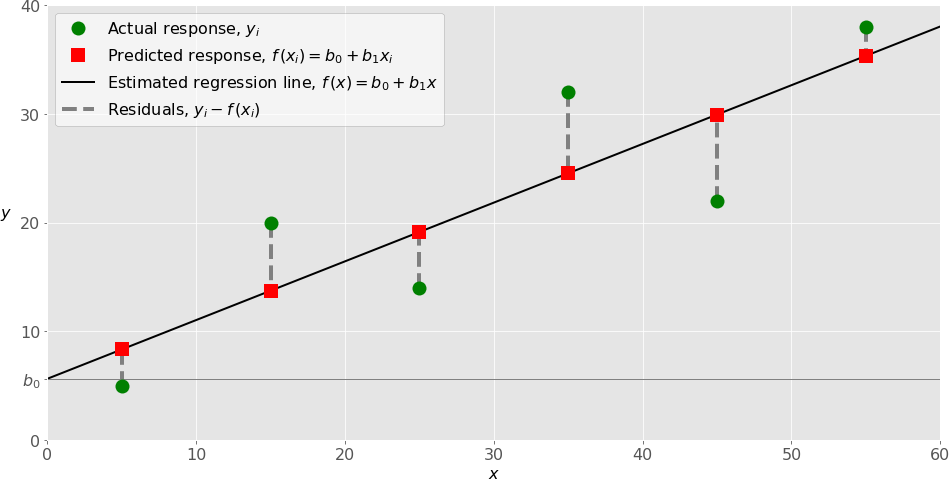

If we compute the correlation between Y and Y' we find that R=.82, which when squared is also an R-square of. Together, the variance of regression (Y') and the variance of error (e) add up to the variance of Y (1.57 = 1.05+.52). The variance of Y' is 1.05, and the variance of the residuals is. The mean of Y is 3.25 and so is the mean of Y'. We can also compute the correlation between Y and Y' and square that. We use a capital R to show that it's a multiple R instead of a single variable r. When we run a multiple regression, we can compute the proportion of variance due to the regression (the set of independent variables considered together). In multiple regression, the linear part has more than one X variable associated with it. Just as in simple regression, the dependent variable is thought of as a linear part and an error. A still view of the Chevy mechanics' predicted scores produced by Plotly: R-square (R 2) This lets you see the response surface more clearly. The plotly package in R will let you 'grab' the 3 dimensional graph and rotate it with your computer mouse. The linear regression solution to this problem in this dimensionality is a plane. What is the expected height (Z) at each value of X and Y? An example animation is shown at the very top of this page (rotating figure). This graph doesn't show it very well, but the regression problem can be thought of as a sort of response surface problem. We can (sort of) view the plot in 3D space, where the two predictors are the X and Y axes, and the Z axis is the criterion, thus: We have 3 variables, so we have 3 scatterplots that show their relations.īecause we have computed the regression equation, we can also view a plot of Y' vs.

Job Perf' = -4.10 +.09MechApt +.09Coscientiousness.

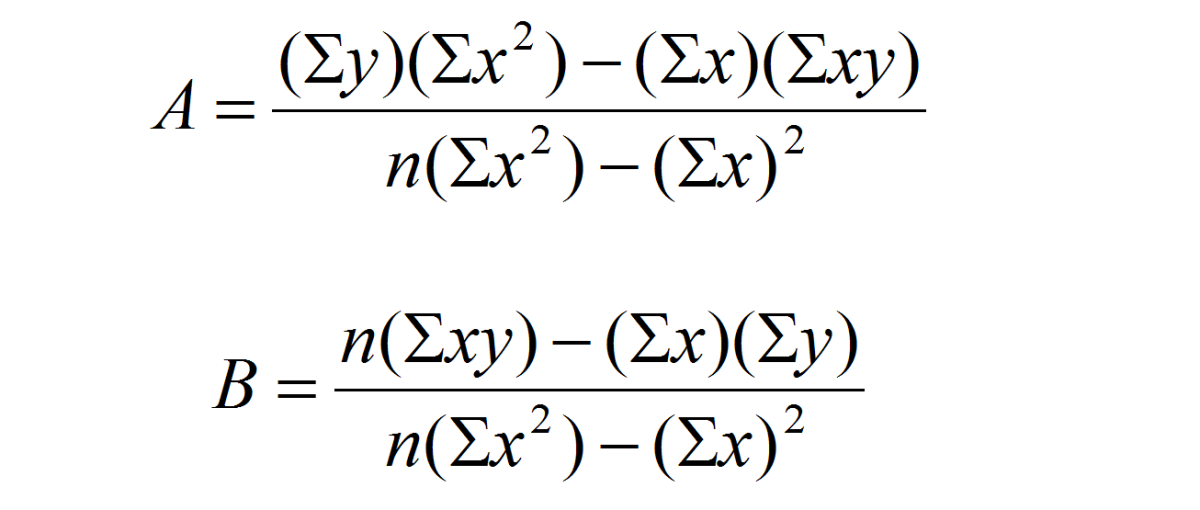

We can now compute the regression coefficients: The numbers in the table above correspond to the following sums of squares, cross products, and correlations: We can collect the data into a matrix like this: (In practice, we would need many more people, but I wanted to fit this on a PowerPoint slide.) Suppose we want to predict job performance of Chevy mechanics based on mechanical aptitude test scores and test scores from personality test that measures conscientiousness. This equation is a straight-forward generalization of the case for one independent variable. The equation for a with two independent variables is: Also note that a term corresponding to the covariance of X1 and X2 (sum of deviation cross-products) also appears in the formula for the slope. Note that terms corresponding to the variance of both X variables occur in the slopes. For example, X 2 appears in the equation for b 1. In the two variable case, the other X variable also appears in the equation. If you understand the meaning of the slopes with two independent variables, you will likely be good no matter how many you have.įor the one variable case, the calculation of b and a was:Īt this point, you should notice that all the terms from the one variable case appear in the two variable case. But the basic ideas are the same no matter how many independent variables you have. It's simpler for k=2 IVs, which we will discuss here. The prediction equation is:įinding the values of b (the slopes) is tricky for k>2 independent variables, and you really need matrix algebra to see the computations. Again we want to choose the estimates of a and b so as to minimize the sum of squared errors of prediction. We still have one error and one intercept. Note that we have k independent variables and a slope for each. 
We can extend this to any number of independent variables: Where Y is an observed score on the dependent variable, a is the intercept, b is the slope, X is the observed score on the independent variable, and e is an error or residual. With one independent variable, we may write the regression equation as: How is it possible to have a significant R-square and non-significant b weights? The Regression Line What are the three factors that influence the standard error of the b weight? Write a regression equation with beta weights in it. Why do we report beta weights (standardized b weights)? What happens to b weights if we add new variables to the regression equation that are highly correlated with ones already in the equation? multiple regression?ĭescribe R-square in two different ways, that is, using two distinct formulas. What is the difference in interpretation of b weights in simple regression vs. Write a raw score regression equation with 2 ivs in it. Regression with Two Independent Variables Questions
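The two-predictor calculations described in this section can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the computation behind the numbers reported here: the scores are made up (hypothetical), and the helper names (`deviations`, `sum_products`, `fit_two_predictors`, `variance`, `correlation`) are invented for this example. The sketch solves for b1, b2, and a from deviation sums of squares and cross-products, then computes R-square two ways: as the ratio of the variance of Y' to the variance of Y, and as the squared correlation between Y and Y'.

```python
from statistics import mean


def deviations(values):
    """Return each score minus the mean of its variable."""
    m = mean(values)
    return [v - m for v in values]


def sum_products(u, v):
    """Sum of products of two equal-length lists (sum of squares if u is v)."""
    return sum(p * q for p, q in zip(u, v))


def fit_two_predictors(y, x1, x2):
    """Least-squares a, b1, b2 for Y' = a + b1*X1 + b2*X2 (deviation-score formulas)."""
    dy, d1, d2 = deviations(y), deviations(x1), deviations(x2)
    s11 = sum_products(d1, d1)   # sum of squares for X1
    s22 = sum_products(d2, d2)   # sum of squares for X2
    s12 = sum_products(d1, d2)   # cross-products of X1 and X2
    s1y = sum_products(d1, dy)   # cross-products of X1 and Y
    s2y = sum_products(d2, dy)   # cross-products of X2 and Y
    denom = s11 * s22 - s12 ** 2
    if denom == 0:
        raise ValueError("X1 and X2 are perfectly collinear (or constant)")
    b1 = (s22 * s1y - s12 * s2y) / denom
    b2 = (s11 * s2y - s12 * s1y) / denom
    a = mean(y) - b1 * mean(x1) - b2 * mean(x2)
    return a, b1, b2


def variance(values):
    """Sample variance (N - 1 in the denominator)."""
    d = deviations(values)
    return sum_products(d, d) / (len(values) - 1)


def correlation(u, v):
    """Pearson correlation between two lists of scores."""
    du, dv = deviations(u), deviations(v)
    return sum_products(du, dv) / (sum_products(du, du) * sum_products(dv, dv)) ** 0.5


# Hypothetical scores, NOT the data from this page.
y = [1.0, 2.0, 2.0, 4.0, 5.0, 6.0]          # criterion (e.g. job performance)
x1 = [40.0, 45.0, 50.0, 55.0, 60.0, 70.0]   # predictor 1 (e.g. mechanical aptitude)
x2 = [25.0, 20.0, 30.0, 35.0, 30.0, 40.0]   # predictor 2 (e.g. conscientiousness)

a, b1, b2 = fit_two_predictors(y, x1, x2)
y_pred = [a + b1 * u + b2 * v for u, v in zip(x1, x2)]   # Y'
resid = [obs - pred for obs, pred in zip(y, y_pred)]     # e = Y - Y'

r2_from_variance = variance(y_pred) / variance(y)
r2_from_correlation = correlation(y, y_pred) ** 2

print(f"Y' = {a:.2f} + {b1:.3f}*X1 + {b2:.3f}*X2")
print(f"var(Y) = {variance(y):.3f}, var(Y') = {variance(y_pred):.3f}, var(e) = {variance(resid):.3f}")
print(f"R-square: {r2_from_variance:.3f} (variance ratio), {r2_from_correlation:.3f} (squared R)")
```

The deviation-score form was chosen because it mirrors the hand calculation with sums of squares and cross-products; for more than two predictors, a matrix-based least-squares routine would be the usual choice.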
